Computer-based music production and composition involves the eyes as much as the ears. The representations in audio editors like Pro Tools and Ableton Live are purely informational: waveforms, grids and linear graphs. Some visualization systems are purely decorative, like the psychedelic semi-random graphics produced by iTunes. Some systems lie in between. I see rich potential in these graphical systems for better understanding of how music works, and for new compositional methods. Here’s a sampling of the most interesting music visualization systems I’ve come across.

Music notation

Western music notation is a venerable method of visualizing music. It’s a very neat and compact system, unambiguous and digital, and not too difficult to learn. Programs like Sibelius can effortlessly translate notation to and from MIDI data, too.

But Western notation has some limitations, especially for contemporary music. It doesn’t handle microtones well. It has limited ability to convey performative nuance — after a hundred years of jazz, there’s no good way to notate swing other than to just write the word “swing” at the top of the score. The key signature system works fine for major keys, but is less helpful for minor keys and modal music and is pretty much worthless for the blues.

Here’s a suggestion for how notation could improve in the future. It’s a visualization by Jon Snydal of John Coltrane’s solo in Miles Davis’ “All Blues” (I edited it a little to make it easier on the eyes).

Snydal’s visualization is more analog than digital — it shows the exact nuances of Coltrane’s performance, with subtle shadings of pitch, timing and dynamics.

MIDI sequencers suggest further improvements over standard notation. Here’s a simplified electronic music sequencer called iNudge. Try it; it’s fun.

Here’s Thelonious Monk’s tune “Four In One” as shown in standard MIDI “piano roll” view. The rectangles show not only which notes are being played and when, but exactly how long they’re held. Darker red means louder, paler pink means quieter. You can also read volume off the bars along the bottom.
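
To make the piano-roll idea concrete, here’s a minimal Python sketch, assuming each note is stored as a pitch, a start time, a length and a velocity, which is roughly how MIDI sequencers think about notes. The `Note` class, the `piano_roll` function and the text “shades” standing in for pale pink through dark red are all hypothetical, not any sequencer’s actual format.

```python
from dataclasses import dataclass


@dataclass
class Note:
    pitch: int     # MIDI note number (60 = middle C)
    start: int     # start time, in grid steps
    length: int    # how long the note is held, in grid steps
    velocity: int  # MIDI velocity, 1-127 (higher = louder)


# Text "shades" from quiet to loud, standing in for pale pink through dark red.
SHADES = ".:*#"


def shade(velocity: int) -> str:
    """Map a MIDI velocity (1-127) to one of the shade characters."""
    return SHADES[min(len(SHADES) - 1, velocity * len(SHADES) // 128)]


def piano_roll(notes: list[Note], steps: int) -> list[str]:
    """Render notes as text rows: highest pitch on top, time running left to right."""
    top = max(n.pitch for n in notes)
    bottom = min(n.pitch for n in notes)
    rows = []
    for pitch in range(top, bottom - 1, -1):
        row = [" "] * steps
        for n in notes:
            if n.pitch == pitch:
                # The width of each run of characters is the note's duration.
                for t in range(n.start, min(n.start + n.length, steps)):
                    row[t] = shade(n.velocity)
        rows.append(f"{pitch:3d} |" + "".join(row))
    return rows
```

Rendering a loud C held for four steps, then a quiet E and a loud G, puts each pitch on its own row, with the note’s length shown as the width of its run of characters and its loudness as the darkness of the character.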

MIDI is a versatile and user-friendly system. You can use it to capture your keyboard performances, import scores, or even just draw notes directly onto the screen (my preferred method).

The Music Animation Machine has a wonderful series of videos matching MIDI piano rolls of various classical pieces with recordings of them. Here’s Bach’s infamous Toccata and Fugue in D minor.

As software gets more sophisticated in its ability to extract pitch data from actual audio recordings, you can start manipulating them with the same ease as MIDI. Here’s a screencap of the pitch-correction program Melodyne, a close cousin of Auto-Tune.

The lines show the actual sung pitches, and the orange blobs show the notes the program thinks the singer meant to hit. The blobs’ thickness shows volume. You can drag and drop the blobs and redraw the lines at will to alter the melody to your heart’s content. Melodyne even transcribes the performance to standard notation and MIDI for you.
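
Melodyne’s pitch detection is proprietary, so this is not how it works internally, but the step from “the pitch the singer actually sang” to “the note they probably meant” can be sketched in a few lines of Python. The sketch assumes twelve-tone equal temperament with A4 = 440 Hz, and the function name `nearest_note` is made up for illustration.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def nearest_note(freq_hz: float, a4_hz: float = 440.0) -> tuple[str, float]:
    """Snap a measured frequency to the nearest equal-tempered note.

    Returns the note name with its octave, plus the deviation in cents
    (100 cents = one half-step; positive = sharp, negative = flat).
    """
    # Fractional MIDI note number: A4 is note 69, and each half-step
    # multiplies the frequency by 2 ** (1 / 12).
    midi = 69 + 12 * math.log2(freq_hz / a4_hz)
    nearest = round(midi)
    cents = (midi - nearest) * 100
    name = NOTE_NAMES[nearest % 12] + str(nearest // 12 - 1)
    return name, cents
```

For example, a note sung at 452 Hz snaps to A4 and comes out roughly 47 cents sharp, which is the kind of gap between line and blob you see in the screenshot.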

High and low

We’ve made up our collective mind that faster vibrations should be spatially represented as being “higher,” and that slower ones should be spatially “lower.” It seems so reasonable, but really it’s totally arbitrary, and it doesn’t even line up with physical experience. On the piano, the high notes are on the right and the low ones on the left. On the guitar, the “low” E string is physically located above the “high” one. The fingerings for higher and lower notes on wind instruments don’t correspond to a simple higher-lower axis either.

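Absolute pitch is a straight-line ladder, but pitch class is circular: every twelve half-steps, you arrive back at the same note name. The truest representation of pitch space is a helix, which climbs steadily with absolute pitch while circling around once per octave.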
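
Here’s a sketch of that helix in Python, assuming MIDI note numbers as the measure of absolute pitch. The name `pitch_helix` and the radius and rise parameters are illustrative choices: the angle around the circle comes from pitch class, and the height comes from absolute pitch, so every C lands at the same spot on the circle, one turn apart.

```python
import math


def pitch_helix(midi_note: int, radius: float = 1.0,
                rise_per_octave: float = 1.0) -> tuple[float, float, float]:
    """Place a MIDI note on a helix: angle from pitch class, height from absolute pitch."""
    pitch_class = midi_note % 12              # 0 = C, 1 = C#, ..., 11 = B
    angle = 2 * math.pi * pitch_class / 12    # one full turn per octave
    x = radius * math.cos(angle)
    y = radius * math.sin(angle)
    z = rise_per_octave * midi_note / 12      # climbs steadily with absolute pitch
    return x, y, z


# Middle C (60) and the C an octave up (72) sit directly above one another.
print(pitch_helix(60), pitch_helix(72))
```

F# (pitch class 6) lands on the opposite side of the circle from C, a tritone away, which is as far apart as two pitch classes can get.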

Other ways to conceptualize pitch space

High and low aren’t the only metaphors we use for faster and slower vibrations. Like I said, pitch class is circular.

But the circle is really just replacing up/down with clockwise/counterclockwise. There are other ways to conceptualize pitch. We intuitively experience changing pitches as moving closer and farther, or inwards and outwards. We also think of higher pitches as brighter and lower pitches as darker. Players of stringed instruments sometimes tune their upper strings a little bit too high on purpose, producing an effect known as brilliance.

Time

It’s a near-universal convention that notation shows time moving from left to right. But that’s not the only possible axis to use. How about forwards and backwards instead? That’s the paradigm in rhythm games like Dance Dance Revolution and Guitar Hero. The purest realization of this concept is in a game called FreQuency.

The game even allows you to construct your own remixes.

I like this tunnel metaphor and would like to see it extended into a full-blown production environment.

Waves

Pitches are vibrations. The simplest are pure sine waves, and most instrument tones can be described as sums of sine waves, so you can visualize them as such.
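
As a rough illustration, here’s how a computer might generate those vibrations as a list of numbers. This Python sketch assumes a CD-style sample rate of 44,100 samples per second; `sine_wave` and `harmonic_tone` are hypothetical helper names, and the harmonic amplitudes you feed in are up to you.

```python
import math


def sine_wave(freq_hz: float, duration_s: float, sample_rate: int = 44100,
              amplitude: float = 1.0) -> list[float]:
    """Sample a pure tone: amplitude * sin(2 * pi * f * t) at t = 0, 1/rate, 2/rate, ..."""
    n_samples = round(duration_s * sample_rate)
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(n_samples)]


def harmonic_tone(freq_hz: float, duration_s: float, harmonic_amps: list[float],
                  sample_rate: int = 44100) -> list[float]:
    """Add sine waves at 1x, 2x, 3x, ... the fundamental; harmonic_amps[k] scales harmonic k + 1."""
    waves = [sine_wave(freq_hz * (k + 1), duration_s, sample_rate, amp)
             for k, amp in enumerate(harmonic_amps)]
    # Sum the waves sample by sample.
    return [sum(samples) for samples in zip(*waves)]
```

Plotting `sine_wave(440.0, 0.01)` gives the smooth curve of a pure A, while something like `harmonic_tone(440.0, 0.01, [1.0, 0.5, 0.25])` gives a lumpier wave with the same period, which is closer to what a real instrument produces.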

Sine waves wouldn’t make for a very helpful music notation, but they do help you understand what’s going on scientifically when you physically hear something. They’re even better animated:

See all of Wikipedia’s animated drum heads.

Waveforms

Audio editors show music as amplitude waveforms, blobs that get wider where the sound is louder. Here’s the Funky Drummer break in ReCycle. The blue blobs show drum hits. These amplitude blobs don’t tell you much about the musical content except for timing and volume. But ReCycle was meant for drum loops, where timing and volume are the only information you really need.
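
For the curious, here’s a minimal Python sketch of how a waveform overview like this can be computed: chop the samples into one bucket per pixel column and keep the lowest and highest values in each. The `detect_hits` function is a deliberately crude stand-in for finding drum hits by volume alone; I don’t know what ReCycle actually does under the hood, and all the names and thresholds here are assumptions.

```python
def waveform_overview(samples: list[float], n_columns: int) -> list[tuple[float, float]]:
    """Reduce audio samples to one (min, max) pair per pixel column.

    Drawing a vertical line from min to max in each column produces the
    familiar blob: wide where the sound is loud, thin where it is quiet.
    """
    overview = []
    for i in range(n_columns):
        # Integer bucket edges so every sample lands in exactly one column.
        start = i * len(samples) // n_columns
        end = (i + 1) * len(samples) // n_columns
        chunk = samples[start:end]
        if chunk:
            overview.append((min(chunk), max(chunk)))
    return overview


def detect_hits(samples: list[float], threshold: float, min_gap: int) -> list[int]:
    """Crude drum-hit finder: indices where the signal jumps above threshold.

    A hit counts only if the previous sample was below the threshold and at
    least min_gap samples have passed since the last hit, so one drum stroke
    (which swings back and forth through zero) isn't counted many times.
    """
    hits: list[int] = []
    was_loud = False
    for i, s in enumerate(samples):
        loud = abs(s) >= threshold
        if loud and not was_loud and (not hits or i - hits[-1] >= min_gap):
            hits.append(i)
        was_loud = loud
    return hits
```

The same min/max trick is a common way to draw scrolling waveform displays like SoundCloud’s, since it keeps the volume peaks visible no matter how far you zoom out.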

Here’s a graphic I made showing how you hear the Funky Drummer as it’s looping:

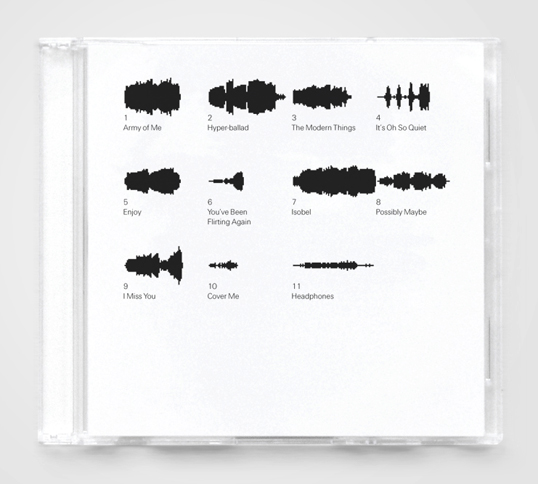

In a post on Design Observer, Rob Walker discusses the waveform as the new icon for music, replacing the stylized eighth notes or records that have done the job in the past. The SoundCloud player uses an attractive waveform graphic that helps the listener track where they are in the song by following the volume peaks. There’s even a SoundCloud group called Pretty Waveforms.

The waveform has the potential to move from purely functional settings to more decorative ones. Here’s a waveform-based labeling concept by Joshua Distler, showing the tracks on Post by Björk.

Music theory and networks

I’ve always thought it would be cool to use networks to conceptualize music theory, and have made a few attempts at doing so. Here’s a comparison between the circle of half-steps and the circle of fifths, which contain the same twelve pitch classes arranged in two different orders:
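
The relationship between the two circles is easy to express in code. Numbering the pitch classes C = 0 through B = 11, a fifth spans seven half-steps, so multiplying by 7 modulo 12 turns the circle of half-steps into the circle of fifths. This Python sketch (the function name `swap_circles` is my own) also checks that doing it twice gets you back where you started.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def swap_circles(pc: int) -> int:
    """Convert between the two circles.

    The note at position k on the circle of fifths is pitch class 7k mod 12,
    and pitch class p sits at position 7p mod 12 on the circle of fifths.
    Because 7 * 7 = 49 = 4 * 12 + 1, applying this map twice is a no-op.
    """
    return (pc * 7) % 12


circle_of_fifths = [NOTE_NAMES[swap_circles(k)] for k in range(12)]
print(" ".join(circle_of_fifths))

# Going around twice returns every pitch class to where it started.
assert all(swap_circles(swap_circles(p)) == p for p in range(12))
```

Only 1, 5, 7 and 11 have this property among multipliers mod 12, so the circle of fifths (7) and the circle of fourths (5) are the only reorderings besides the chromatic circle and its mirror image that visit all twelve notes in a single loop.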

Here’s a map of the chord progressions in “Giant Steps” by John Coltrane.
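
A chord map like this is really a directed graph: each chord is a node, and each move from one chord to the next is an arrow. Here’s a small Python sketch that counts those arrows. The chord list is a partial chart of the opening bars as they’re commonly written in fake books, included for illustration; check it against your own source before relying on it. The function name `progression_graph` is hypothetical.

```python
from collections import Counter


def progression_graph(chords: list[str]) -> Counter:
    """Count every move from one chord to the next.

    Each key (a, b) is an arrow a -> b on the map; its count is how often
    that move happens in the progression.
    """
    return Counter(zip(chords, chords[1:]))


# Opening bars of "Giant Steps" as commonly charted (partial, for illustration).
opening_bars = ["B", "D7", "G", "Bb7", "Eb", "Am7", "D7",
                "G", "Bb7", "Eb", "F#7", "B", "Fm7", "Bb7"]

graph = progression_graph(opening_bars)
for (a, b), count in graph.items():
    print(f"{a} -> {b}: {count}")
```

The arrows that show up twice (D7 to G, G to Bb7, Bb7 to Eb) trace the dominant chords leading into the tune’s key centers, B, G and Eb, which sit a major third apart.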

And here’s a flowchart showing how you can figure out what scale or mode you’re hearing.
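
A flowchart like this narrows down the possibilities as you hear more notes. Here’s a rough Python equivalent for the seven diatonic modes, assuming you already know the tonic and the pitch classes you’ve heard (C = 0 through B = 11). It’s not a transcription of the flowchart itself, and `identify_mode` is a hypothetical name. It just keeps every mode that contains all the notes heard so far.

```python
# Half-steps above the tonic for each of the seven diatonic modes.
MODES = {
    "Ionian (major)": {0, 2, 4, 5, 7, 9, 11},
    "Dorian": {0, 2, 3, 5, 7, 9, 10},
    "Phrygian": {0, 1, 3, 5, 7, 8, 10},
    "Lydian": {0, 2, 4, 6, 7, 9, 11},
    "Mixolydian": {0, 2, 4, 5, 7, 9, 10},
    "Aeolian (natural minor)": {0, 2, 3, 5, 7, 8, 10},
    "Locrian": {0, 1, 3, 5, 6, 8, 10},
}


def identify_mode(tonic: int, heard: set[int]) -> list[str]:
    """Return every mode that contains all the pitch classes heard, measured from the tonic."""
    relative = {(pc - tonic) % 12 for pc in heard}
    return [name for name, steps in MODES.items() if relative <= steps]
```

For example, hearing D, E, F, G, A, B and C over a D tonic leaves only Dorian, while hearing just C and G over a C tonic rules out only Locrian, the one mode without a perfect fifth.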

It would be way cooler to have more abstract three-dimensional interactive visualizations showing how chords, scales and melodies function. Leonhard Euler showed how you can represent tonal harmony as a lattice, the Tonnetz. In equal temperament, that lattice wraps around on itself into the shape of a torus, as shown in this animation. Red lines show major thirds, green lines show minor thirds, and blue lines show fifths:
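
The lattice is simple to describe with arithmetic on pitch classes (C = 0 through B = 11), assuming twelve-tone equal temperament. In this Python sketch, with made-up function names, each point on a flat grid is some number of fifths in one direction plus some number of major thirds in the other. Because three major thirds, or twelve fifths, bring you back to the same pitch class, the grid wraps around in both directions, which is what gives it the shape of a torus.

```python
# Interval sizes, in half-steps, for the three kinds of lines in the lattice.
FIFTH, MAJOR_THIRD, MINOR_THIRD = 7, 4, 3


def tonnetz_neighbors(pc: int) -> dict[str, list[int]]:
    """Pitch classes one line away from pc, going up and down along each axis."""
    return {
        name: [(pc + size) % 12, (pc - size) % 12]
        for name, size in [("fifth", FIFTH), ("major third", MAJOR_THIRD),
                           ("minor third", MINOR_THIRD)]
    }


def lattice_point(fifths: int, major_thirds: int) -> int:
    """Pitch class reached from C by stepping along the fifths and major-thirds axes."""
    return (fifths * FIFTH + major_thirds * MAJOR_THIRD) % 12


# A minor third is the grid's diagonal: up a fifth, down a major third (7 - 4 = 3).
assert lattice_point(1, -1) == MINOR_THIRD

# The flat grid repeats itself in both directions: that repetition is the torus.
assert lattice_point(0, 3) == lattice_point(0, 0)   # three major thirds = one octave
assert lattice_point(12, 0) == lattice_point(0, 0)  # twelve fifths = seven octaves
```

Each triangle of neighboring points spells out a major or minor triad, which is why the lattice is such a handy map of tonal harmony.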

I have ambitions of my own in this area, but so far I lack the programming skills to realize them. Others are taking some exciting strides, though. Dmitri Tymoczko made waves when Science published his article on topological visualization methods for tonal harmony, reportedly the first music theory article to appear in that journal. I can’t quite wrap my head around his ideas, but they’re intriguing.

Here’s an illustration by Aniruddh Patel from his paper, “Language, Music, Syntax And The Brain.” Again, I’m not totally clear what it all means, but I plan to investigate further.

Other theorists have attempted to use color to show harmonic function. Scriabin invented a “keyboard of lights” for that purpose, though it didn’t really catch on.

Visualizing musical form and structure

I like to use simple color-coding to keep track of which section is which while working on a song. Yellow is for intros and outros, blue is for verses, green is for choruses and orange is for instrumentals and breakdowns.

Edward Tufte shows some more sophisticated song structure visualizations on his forum:

The Shape of Song project by Martin Wattenberg shows repetition within a piece of music. Here’s his visualization of “Like A Prayer” by Madonna.

Here’s Wattenberg’s visualization of Beethoven’s “Für Elise.”
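
Wattenberg’s arc diagrams connect passages that repeat. His actual matching method is more sophisticated than this, but here’s a minimal Python sketch of the basic idea, assuming the music has already been reduced to a sequence of symbols (notes, chords or section labels). It links each fixed-length passage to its next repetition; the function name and the example sequence are hypothetical.

```python
def repetition_arcs(sequence: list[str], length: int) -> list[tuple[int, int]]:
    """Find (i, j) pairs where the passage of `length` symbols starting at i
    recurs at j. Each passage links only to its next repetition, so a
    section heard four times produces a chain of three arcs."""
    last_seen: dict[tuple[str, ...], int] = {}
    arcs = []
    for j in range(len(sequence) - length + 1):
        window = tuple(sequence[j:j + length])
        if window in last_seen:
            arcs.append((last_seen[window], j))
        last_seen[window] = j
    return arcs


song = ["verse", "chorus", "verse", "chorus", "bridge", "chorus"]
print(repetition_arcs(song, 1))
```

Drawing each pair as a semicircle over a timeline, with wider arcs for passages that recur further apart, gives something like the Shape of Song look.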

Speculation

Here’s an entertaining video by Niklas Roy showing how you can create a happening drum machine sequence by counting in binary.
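
The binary-counting trick is easy to try for yourself. In this Python sketch, each bit of a step counter is assigned to a drum, and the drum plays on every step where its bit is 1. The drum assignments and the `binary_drum_grid` name are my own assumptions, not necessarily how Roy wired his machine. The lowest bit flips every step, the next every two steps, and the next every four, which is where the groove comes from.

```python
# Hypothetical assignment: counter bit 0 -> hi-hat, bit 1 -> snare, bit 2 -> kick.
DRUMS = ["hi-hat", "snare", "kick"]


def binary_drum_grid(steps: int) -> list[str]:
    """One text row per drum: 'x' where that drum's bit of the step number is 1."""
    rows = []
    for bit, drum in enumerate(DRUMS):
        row = "".join("x" if (step >> bit) & 1 else "." for step in range(steps))
        rows.append(f"{drum:>7} {row}")
    return rows


for line in binary_drum_grid(8):
    print(line)
```

With eight steps, the hi-hat lands on every other step, the snare plays in pairs, and the kick fills the second half of the bar. Reassigning bits to different drums, or adding more bits, changes the pattern without any other programming.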

Wouldn’t this graph coloring system make a cool music notation or interface?

Visual Complexity has many more ideas like this one.

I feel like we’ve barely scratched the surface of useful and attractive schemes. Are there other cool visualization methods I should know about? Hit the comments.

Updates

John Clover hipped me to this post, which overlaps heavily: Amazing Music Visualizations and Teaching

I just had the chance to play with some of Björk’s Biophilia song/apps. Some of them are groundbreaking interactive visualizations; some are just entertaining and groovy; some are baffling but deserve points for creativity. All the way around, it’s a remarkable experiment, one that I think is going to be influential.

Thanks for taking the time to share your work.

After 50 years I’m still struggling to understand musical theory. To me (I think) that means the relationship between a starting note (root), a second note, and depending on the interval, the emotional resonance/feeling/response ??!!

For me, the traditional music language isn’t intuitive. # b dim 7 etc. In interval terms, are we talking 1.0, 1.5, 2.0, 2.5 ….. 7.0 in modern terms? Are you brave enough to invent a new language?!

Regards

Chris

Where did all the lovely images go?

I’m undergoing a transition in my image hosting away from Flickr. Should have everything fixed in a day or three.

Here are a couple: animatednotation.com and animatednotation.blogspot.com

This write-up is terrific, really inspiring for educators and visualizers. Thanks for sharing all of your insights. Fun to read all of your scholarship from afar. Hope all is good!

Hello!

Great article. What about recent iPad/Android apps which in a way are both visualization and interfaces? Some examples: Nodebeat, Reactable, Orphion… there are surely others.

Also, great blog!

Thanks!

JP

Good point. I think the convergence between interface and visualization is exciting. Glad you’re enjoying the blog.

As soon as I realized that the “Western notation” example at the top of the article was “Chameleon”, I was hooked. Subtle…and brilliant.

And what a WHOPPER of an analysis. I’m impressed by the rich depth of this article!

Much appreciated.

I was doing a semi-musical clinic with the Navy Band in New Orleans at an elementary school about 4 years ago. We had the “ceremonial band” (Sousa music) perform in front of a bunch of 2nd graders. At one point, one of our tuba players went to the front with a saxophone player. The saxophonist played some high-pitched alto notes, then the tubist played some really low notes. Then the sax player asked the audience a seemingly elementary question: “Which one sounds higher?” Almost all of the kids pointed at the tuba. They said, “No, let’s try again.” They played their respective instruments again, but the kids still pointed to the “higher” pitched tuba. The children related the tuba’s sound and image to a very tall figure and the saxophone to a very small, low-to-the-ground creature. It didn’t make sense to me at first, but I now realize there is no “higher” pitch. There is only the way our culture interprets and visualizes pitch.

Great story.

Great article, thanks for this. If you have some interesting ideas and are looking for musical programmers to connect with, you should check out the Overtone community (http://groups.google.com/group/overtone/). We’re always looking for cool ways to represent musical notation. Currently we work purely with text, but we’re looking for exciting ways to break out of that where appropriate.

Will definitely check you guys out!

I came across this recently. I’m not terribly convinced, mind.

Had never heard of it before. I like the idea in the abstract, would need to sit down and try it out to really evaluate.

I liked how this flowed from the more familiar through to the more abstract – nice and accessible – will be forwarding it to the 13 year old trombone player! ;-)

I still fondly remember the “psychedelic” screen savers that were hugely popular at the end of the 90’s and early 00’s – I think I bought Andy O’Meara’s Whitecap several times over the years.

http://www.soundspectrum.com/

Of course – now I’m going to have to go buy it again!

The 13 year old trombone players of the world are exactly who I hope to reach with this stuff. There is something magical about those psychedelic screen savers, a whole art form unto themselves.